How hard is constraint programming?

I’ve been writing code for the Z3 SMT solver for several months now. Here are my findings.

Python is used here as the base language. Python/Z3 feels like a two-layer programming model: declarative code for Z3, imperative code for Python. In this respect it is reminiscent of C++/CUDA programming for NVIDIA GPUs, though there both layers (CPU and GPU code) are imperative. In both cases the combination is clever, surprisingly fluent, and versatile, even if the two layers never quite merge into one seamless conceptual model.

Other comparisons:

- Both have two separate memory spaces (CUDA CPU/GPU memories for one; pure Python variables and Z3 variables for the other).

- Both can be tricky to debug. In its early days, CUDA had no debugger, so one had to fall back on the trusty “printf” statement (for a while it didn’t even have that!), and if the code crashed, you might get no output at all. To my knowledge, Z3 has no dedicated debugger. If the problem comes back satisfiable, you can print the model variables Z3 found, but if it comes back unsatisfiable, you get very little information. Like some other novel platforms, Z3 is something of a “black box.”

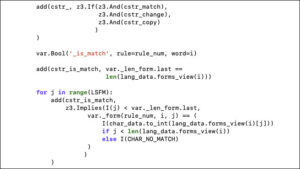

- In both cases, programmer productivity is well served by developing custom abstractions. I developed a Python class to manage multidimensional arrays of Z3 variables, and it was a huge time saver.

There are differences too, of course.

- In Python, “=” is assignment, but in Z3 one has only “==”, which denotes logical or numeric equality, not assignment per se. A Z3 variable takes a single value in any given solution and can’t be changed, a sort of “write-once variables” programming model that is natural to logic programming.

- Code speed optimization is challenging. Changes to Z3 constraints or variables can have extreme and unpredictable effects on runtime, which makes tuning hard. Z3 is, after all, attacking problems that are NP-hard in general (Boolean satisfiability alone is NP-complete), so runtimes can blow up dramatically. Speedups can be massive too; one round of changes I made gave a 2000X speedup on a test problem. CUDA code runtime can also be unpredictable, though to a lesser degree, depending on the PTX and SASS code-generation phases and the CUDA compiler’s aggressive optimizations. Still, it seems easier to “see through” CUDA code down to the metal to understand expected performance, at least for small code fragments. The Z3 solver can output statistics on the solve, but a non-expert will find them hard to act on.

- Z3 provides many, many algorithmic tuning knobs, including configuration parameters and composable solving strategies called “tactics,” and it’s hard to reason about which ones to pick. Autotuners like FastSMT might help. There have also been efforts to build tools that visualize the solve process, which could help as well.

It would be great to see more modern tooling and community best practices to support developers writing Z3 code.